The AI agent you demoed in a notebook is not the agent you’ll ship. What determines whether it’s fast, compliant, affordable, and observable isn’t the model name on your slide; it’s the inference layer you pick and how you design around it.

In the last few weeks, Cloudflare pitched an inference layer “designed for agents,” pushing compute to the edge. OpenAI expanded desktop-control capabilities, making agents that can use your computer in the background feel uncomfortably real. Mozilla unveiled a client focused on self-hosted infrastructure. Meanwhile, open models like Qwen3.6-35B are becoming agent-capable. Translation: the platform decision is now the product decision.

If you’re a CTO, you have to choose a lane. Here’s a pragmatic framework to do it.

The real choice isn’t “which model?” It’s “where and how do we run it?”

Benchmarks and leaderboards are noisy. What matters in production is latency budget, data control, cost stability, and operability. An agent that feels snappy at 250 ms p50 and 800 ms p95 with a clean audit trail will beat a slightly “smarter” agent that takes 2.5 s and sprays secrets into logs.

Define your agent capability tier (and implied risk)

- Tier 0: Chat and RAG. Stateless Q&A. Minimal tool use. Low blast radius.

- Tier 1: Function calling and API orchestration. Writes to internal systems behind strict IAM.

- Tier 2: Browsing, file I/O, and code execution in a sandbox. Medium blast radius.

- Tier 3: Desktop or cloud console control (e.g., shell, GUI automation). High blast radius, needs isolation and recording.

- Tier 4: Autonomous loops with background tasks and scheduling. Highest blast radius. Treat like a privileged service account with brakes.

Your inference layer choice should follow the highest tier you intend to support in the next two quarters—not the demo you ran last week.
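As a rough sketch of what "follow the highest tier" means in practice, the tiers can be modeled as an ordered enum, with controls accumulating as you climb. The control names below are hypothetical labels loosely drawn from the tier descriptions, and the assumption that each tier inherits everything below it is ours, not a standard.

```python
from enum import IntEnum


class AgentTier(IntEnum):
    CHAT_RAG = 0
    FUNCTION_CALLING = 1
    SANDBOXED_EXECUTION = 2
    DESKTOP_CONSOLE = 3
    AUTONOMOUS_LOOPS = 4


# Hypothetical control labels; adapt them to your own control catalog.
CONTROLS_ADDED_AT = {
    AgentTier.CHAT_RAG: ["prompt_redaction"],
    AgentTier.FUNCTION_CALLING: ["strict_iam", "tool_allowlist"],
    AgentTier.SANDBOXED_EXECUTION: ["sandbox"],
    AgentTier.DESKTOP_CONSOLE: ["isolated_vm", "session_recording"],
    AgentTier.AUTONOMOUS_LOOPS: ["scheduler_limits", "kill_switch"],
}


def required_controls(planned_tiers):
    """Return the highest planned tier and every control up to that tier."""
    highest = max(planned_tiers)
    controls = []
    for tier in AgentTier:
        if tier <= highest:
            controls.extend(CONTROLS_ADDED_AT[tier])
    return highest, controls
```

Calling `required_controls([AgentTier.CHAT_RAG, AgentTier.SANDBOXED_EXECUTION])` plans for Tier 2 even if today's demo only needs Tier 0.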

A seven-question decision framework

1) What’s your latency budget and where are your users?

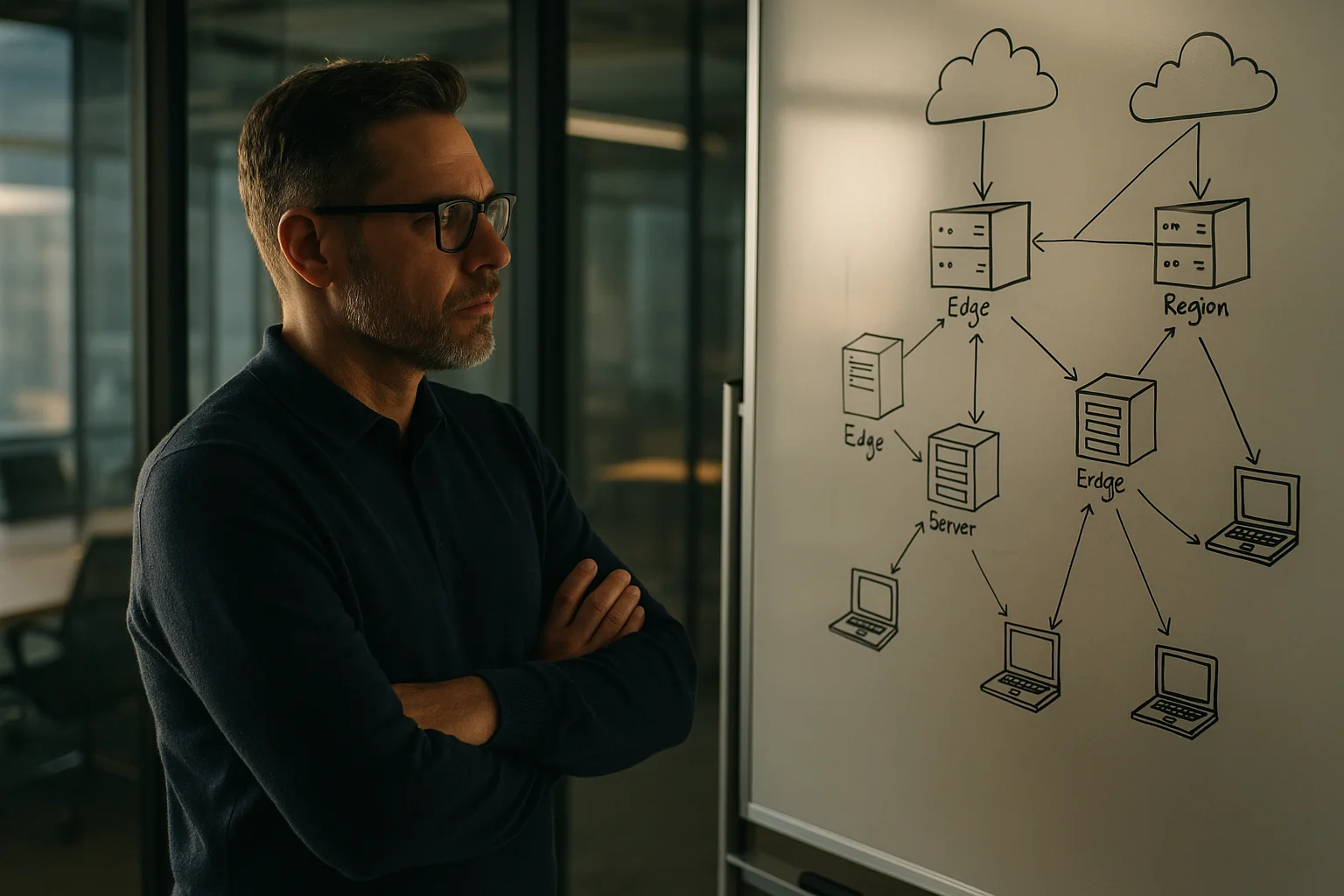

- Edge inference (e.g., running on a global network) can shave 50–150 ms by eliminating long-haul hops and cold-starts for chat+RAG experiences.

- Regional inference (AWS, GCP, Azure) adds 30–120 ms per call depending on peering and VPC setup. Often preferable for backoffice workflows.

- On-device can hit 30–80 ms for small models but sacrifices capability and consistency; great for privacy-heavy or occasional tasks.

Rule of thumb: if your product feature must feel instantaneous (p95 under 1 s) and users are globally distributed, favor edge or a split architecture with an edge router, local embeddings/rerankers, and a regional “brain.”

2) What data do you touch, and where must it live?

- PHI, PCI, or strict PII policies push you toward VPC-hosted inference or providers with enforceable data residency and no training on inputs.

- Data minimization and tokenization at the gateway reduce risk and vendor lock-in. Redact before inference, not after.

- For EU/LatAm residency, plan for dual deploys. Cross-border hops will kill both latency and compliance narratives.
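To make "redact before inference" concrete, here is a minimal sketch of gateway-side tokenization. The regexes are deliberately simplistic, the `vault` is a plain dict standing in for a sealed store, and the function names are illustrative assumptions rather than any particular product's API.

```python
import re
import uuid

# Simplistic patterns for illustration; production detectors need far more coverage.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
SSN_RE = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def redact(text, vault):
    """Swap sensitive values for opaque tokens and keep the originals in `vault`."""

    def replace_with_token(kind):
        def _sub(match):
            token = f"<{kind}_{uuid.uuid4().hex[:8]}>"
            vault[token] = match.group(0)
            return token

        return _sub

    text = EMAIL_RE.sub(replace_with_token("EMAIL"), text)
    text = SSN_RE.sub(replace_with_token("SSN"), text)
    return text


def rehydrate(text, vault):
    """Restore the original values after inference, if the use case requires it."""
    for token, original in vault.items():
        text = text.replace(token, original)
    return text
```

Only the redacted string crosses the provider boundary; the vault stays on your side of it.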

3) What tools will the agent use, and how do you sandbox them?

- Function calling against internal services demands signed requests, per-tool allowlists, and budget caps per turn.

- Desktop or console control requires ephemeral VMs/microVMs, session recording, and per-task credentials with tightly scoped RBAC.

- Web browsing needs safe renderers and content sanitization; do not let an agent execute arbitrary JavaScript in your prod network.
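A deny-by-default allowlist with per-turn budget caps does not need much machinery. The sketch below assumes you can estimate a cost per tool call up front; the class and parameter names are hypothetical.

```python
class ToolPolicyError(Exception):
    """Raised when a tool call violates the allowlist or the turn budget."""


class TurnPolicy:
    def __init__(self, allowed_tools, max_calls, max_cost_usd):
        self.allowed_tools = frozenset(allowed_tools)
        self.max_calls = max_calls
        self.max_cost_usd = max_cost_usd
        self.calls = 0
        self.cost_usd = 0.0

    def authorize(self, tool_name, estimated_cost_usd):
        """Check a proposed tool call; record it only if every check passes."""
        if tool_name not in self.allowed_tools:
            raise ToolPolicyError(f"tool not allowlisted: {tool_name}")
        if self.calls + 1 > self.max_calls:
            raise ToolPolicyError("per-turn call limit reached")
        if self.cost_usd + estimated_cost_usd > self.max_cost_usd:
            raise ToolPolicyError("per-turn spend limit reached")
        self.calls += 1
        self.cost_usd += estimated_cost_usd
```

Create one `TurnPolicy` per agent turn and call `authorize` before dispatching any tool, so a runaway plan fails closed instead of burning budget.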

4) What SLA and vendor risk can you tolerate?

- Public endpoints from closed providers deliver top-tier models but can throttle or change terms. Have a fallback model and a routing layer.

- Edge networks can be resilient but add a dependency on POP availability and vendor features. Validate the catalog of supported models and how quickly the vendor adds or upgrades them.

- Self-hosting buys control but moves uptime on-call to your team. Budget SRE hours. No free lunch.

5) Can you make unit economics converge?

Work backwards from a task: average 300 input tokens, 800 output tokens, 2 function calls. Multiply by your monthly task volume. Then build two scenarios:

- Premium closed model for cognition + cheap open reranker/embedding at edge.

- All-open stack fine-tuned/LoRA’d for your domain.

We routinely see 20–40% cost swings based on how much you offload to rerankers and how aggressively you batch or cache embeddings. Also account for vector DB egress; keeping rerank and vector search co-located with inference avoids dumb network bills.
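The arithmetic is simple enough to keep in a small script you rerun whenever prices change. In this sketch every price is a placeholder assumption, not a quote from any provider, and the flat per-tool-call cost is a simplification; plug in your own contract numbers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scenario:
    name: str
    usd_per_million_input: float
    usd_per_million_output: float
    usd_per_tool_call: float


def cost_per_task(s, input_tokens=300, output_tokens=800, tool_calls=2):
    """Cost of one task using the token and tool-call profile from the text."""
    return (
        input_tokens * s.usd_per_million_input / 1_000_000
        + output_tokens * s.usd_per_million_output / 1_000_000
        + tool_calls * s.usd_per_tool_call
    )


def monthly_cost(s, tasks_per_month):
    return cost_per_task(s) * tasks_per_month


# Placeholder prices for illustration only.
premium = Scenario("premium + edge reranker", 3.00, 15.00, 0.0005)
all_open = Scenario("all-open stack", 0.50, 1.50, 0.0005)

for scenario in (premium, all_open):
    print(scenario.name, round(monthly_cost(scenario, 1_000_000), 2))
```

The useful output is the gap between scenarios at your real volume, not either absolute number.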

6) How will you observe, eval, and rollback?

- Every agent step should emit spans with tool, tokens, latency, and cost. Sample raw prompts/completions with redaction.

- Run nightly synthetic evals on a fixed corpus. Track success rate and hallucination deltas per model version.

- Make models a config toggle. Ship with a kill switch, and rehearse rollbacks like you rehearse incident response.
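A span per agent step can start as a context manager that appends a record to whatever sink you already have. This sketch uses an in-memory list as the sink and hypothetical field names; a real deployment would likely forward the same fields to its tracing system.

```python
import time
from contextlib import contextmanager

SPANS = []  # Stand-in sink; replace with your tracing or logging backend.


@contextmanager
def agent_span(step, model_version, tool=None):
    """Record tool, tokens, latency, and cost for one agent step."""
    record = {
        "step": step,
        "model_version": model_version,
        "tool": tool,
        "tokens_in": 0,
        "tokens_out": 0,
        "cost_usd": 0.0,
    }
    start = time.perf_counter()
    try:
        yield record
    finally:
        # Emit the span even when the step raises, so failures stay visible.
        record["latency_ms"] = (time.perf_counter() - start) * 1000
        SPANS.append(record)


with agent_span("llm_call", model_version="model-a") as span:
    span["tokens_in"] = 300
    span["tokens_out"] = 800
```

Because the model version travels with every span, per-version regressions show up in the same data you use for latency and cost.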

7) What’s your escape hatch?

- Keep prompts, tool schemas, and guardrails provider-agnostic. Use an adapter layer so model swaps don’t hit product code.

- Maintain at least one open-source model path (vLLM/Ollama) for continuity during vendor outages or pricing changes.
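One way to picture the adapter layer is a small router that product code calls instead of any provider SDK. Everything here is a hypothetical sketch: the adapters are stubs standing in for real provider clients, and the kill switch is an in-memory set rather than a real config flag.

```python
from typing import Protocol


class ModelAdapter(Protocol):
    def complete(self, prompt: str) -> str: ...


class StaticAdapter:
    """Stub standing in for a working provider client."""

    def __init__(self, reply):
        self.reply = reply

    def complete(self, prompt):
        return self.reply


class UnavailableAdapter:
    """Stub that simulates a provider outage."""

    def complete(self, prompt):
        raise ConnectionError("provider unavailable")


class ModelRouter:
    def __init__(self, adapters, order):
        self.adapters = adapters  # name -> adapter
        self.order = order        # preferred order of model paths
        self.disabled = set()     # kill switch per model path

    def kill(self, name):
        self.disabled.add(name)

    def complete(self, prompt):
        """Try each enabled path in order; return (path_name, completion)."""
        failures = []
        for name in self.order:
            if name in self.disabled:
                continue
            try:
                return name, self.adapters[name].complete(prompt)
            except Exception as exc:
                failures.append(f"{name}: {exc}")
        raise RuntimeError("no model path available: " + "; ".join(failures))
```

With `order=["premium", "open"]`, an outage on the premium path falls through to the open-model path without any change to product code.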

Your platform options, without the hype

Cloudflare Workers AI (edge) + Vectorize + KV/Queues

Cloudflare is explicitly positioning its AI platform as an inference layer for agents, with global POPs and tight integration across storage and networking. The practical upside: low cold-starts, fast tail latencies, and cheap intra-platform data movement.

- Strengths: 300+ POPs, close-to-user latency; easy composition with KV/Queues/Durable Objects; good fit for chat+RAG and lightweight tool use.

- Constraints: Model catalog depth varies; long-running or GPU-heavy jobs can be awkward; fine-tuning options are limited compared to hyperscalers. You accept the vendor’s feature roadmap as a gating factor.

- Good fit: Product features where p95 under 1 s matters, and data sensitivity is manageable with redaction/tokenization.

Reference: see Cloudflare’s AI platform overview for current model support and architectural patterns (blog.cloudflare.com/ai).

AWS Bedrock + Lambda/ECS + VPC (regional)

Bedrock gives you a menu of high-quality closed and open models in your VPC boundary, with IAM and PrivateLink support. It’s boring in the best way.

- Strengths: Enterprise-grade IAM, CloudWatch, KMS; compliance story; co-location with your data sources; steady evolution (agents, guardrails).

- Constraints: Regional latency; cost opacity when mixing managed services; occasional model capacity constraints; you still need to design your own routing and evals.

- Good fit: Backoffice agents, PII/PHI workloads, internal automation where auditability trumps absolute latency.

OpenAI/Anthropic endpoints (managed cognition)

Top-tier reasoning models, strong function-calling, tool-use planning, and now increasingly assertive system capabilities like background desktop control.

- Strengths: Best-in-class reasoning and coding; frequent model improvements; easy to prototype; good tool-use semantics.

- Constraints: Vendor terms and rate limits; data control depends on settings and enterprise agreements; public internet hop and egress; you own sandboxing.

- Good fit: When reasoning quality dominates and you can mitigate data risk via redaction, or for “brain” calls behind an edge/router and open-model pre/post processing.

Reference: provider blogs announce rapid capability changes; plan for change control (openai.com/blog, anthropic.com/news).

Self-hosted open models with vLLM/Ollama on Kubernetes (VPC)

If data control and cost predictability beat raw IQ, self-host. vLLM gives you high-throughput serving; modern open models (Qwen, Llama, Mistral) are becoming very capable, especially with domain tuning and reranking.

- Strengths: Full data control; predictable cost per GPU hour; can run quantized models on smaller GPUs/CPUs for non-critical paths; avoids vendor lock-in.

- Constraints: You run SRE; model lifecycle and evaluation discipline are on you; sustaining state of the art needs ongoing tuning.

- Good fit: Regulated data, offline/air-gapped scenarios, or cost-sensitive high-volume RAG where top-tier model quality isn’t mandatory.

On-device (mobile/desktop)

With small, quantized models and increasingly capable mobile NPUs, on-device inference is real for narrow tasks (summarization, classification, client-side redaction, age checks).

- Strengths: Privacy, offline capability, lowest network latency, potential compliance wins when inference never leaves the device.

- Constraints: Limited model size and context; distribution and upgrade complexity; fragmented hardware acceleration.

- Good fit: Privacy-first features, pre/post-processing, gating tasks before server-side cognition.

Reference architectures that ship

1) Consumer feature: low-latency chat + RAG

- Edge router on Cloudflare (or similar) for request termination, redaction, rate limiting, and feature flags.

- Edge embeddings and rerankers for candidate selection from a co-located vector store. Cache aggressively.

- Brain calls to a regional premium model (OpenAI/Anthropic or Bedrock) only when needed. Short contexts, tool-guarded.

- Observability: span-level logging with token counts, p50/p95 per stage, and sampled transcripts with PII masked.

We’ve seen this trim median latency by 25–35% versus “region-only” designs, with a 15–25% reduction in LLM spend due to smarter pre-filtering.

2) Internal backoffice agent with sensitive data

- Run inference in your VPC (Bedrock or vLLM) behind an OIDC gateway. All tools require signed JWTs and per-scope IAM roles.

- Centralize secrets in your standard vault; never in prompts. Log all tool invocations to an append-only store.

- Use a policy engine to approve/deny actions above a risk threshold (e.g., payment > $1000 requires human confirmation).

- Nightly evals on a golden dataset; regression gates block new model versions that degrade beyond agreed margins.
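The risk-threshold rule above can be sketched as a pure function that runs before any tool call executes. The action names and the three-way decision are illustrative assumptions; the $1000 threshold mirrors the example in the list.

```python
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    REQUIRE_HUMAN = "require_human"
    DENY = "deny"


def evaluate_action(action, amount_usd, allowed_actions, approval_threshold_usd=1000.0):
    """Deny unknown actions, escalate risky ones, allow the rest."""
    if action not in allowed_actions:
        return Decision.DENY
    if amount_usd > approval_threshold_usd:
        return Decision.REQUIRE_HUMAN
    return Decision.ALLOW
```

Keeping the check free of side effects makes it easy to unit test and to log alongside each tool invocation in the append-only store.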

Expect slightly higher latencies (p95 900–1400 ms), but strong auditability and data governance that passes external review.

3) Desktop/console automation (high blast radius)

- Never let the agent touch a real user desktop. Spin up ephemeral microVMs or containers with a disposable identity and per-run credentials.

- Use a two-tier brain: closed model for planning; open self-hosted model for iterative low-risk steps to control cost and avoid rate limits.

- Record the session (video + logs), cap runtime and token budgets, and require human approval for destructive actions.

- Run all downloads through a safe renderer and AV scan. Network egress locked to allowlists.

Yes, it’s heavier. But this is the difference between a controlled demo and a production system you can defend in an incident review.

Security controls that matter more than your model choice

- Redaction at the edge: Strip or tokenize emails, SSNs, and similar identifiers before they reach a prompt. Keep originals in a sealed store keyed by a token if you must rehydrate post-inference.

- Tool allowlists + budgets: Max N tool calls per turn, max spend per session, and per-tool scopes. Deny by default.

- Timeboxing: Hard timeouts and watchdogs on agent loops. No background forever-jobs without schedulers you control.

- Data lineage: Tag each artifact with model version, prompt hash, and tool chain. Make it possible to answer “what produced this?”

- Jailbreak tests in CI: Red-team prompts checked into your repo. Run them against each model upgrade before rollout.
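Timeboxing is the control most often left as a TODO, so here is a minimal sketch of a loop with hard step and wall-clock budgets. The `step` callable and its return convention are assumptions for illustration. Note that this only checks the deadline between steps; interrupting a step that hangs needs a process-level or VM-level watchdog on top.

```python
import time


class BudgetExceeded(Exception):
    """Raised when the agent loop runs out of steps or time."""


def run_agent_loop(step, max_steps, max_seconds, clock=time.monotonic):
    """Call step(i) until it returns True, within step and time budgets.

    Returns the number of steps taken on success.
    """
    deadline = clock() + max_seconds
    for i in range(max_steps):
        if clock() >= deadline:
            raise BudgetExceeded("time budget exhausted")
        if step(i):
            return i + 1
    raise BudgetExceeded("step budget exhausted")
```

The injectable `clock` exists so the budget logic can be tested without real sleeps.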

Measuring success: KPIs that keep you honest

- Latency: p50/p95 per stage (embed, rerank, LLM, tools), not just end-to-end.

- Quality: Task success rate from nightly evals; factuality/hallucination rate on a labeled subset.

- Economics: Cost per successful action (not per token), cache hit rates, and vector queries per task.

- Reliability: Human handoff rate, auto-retry rate, and incidents per 10k tasks.

- Change safety: % of model upgrades rolled back, mean time to rollback.
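Two of these KPIs are easy to get subtly wrong, so a small sketch may help: per-stage percentiles and cost per successful action. The record shapes are hypothetical, and the percentile uses the nearest-rank method, which is one of several common definitions.

```python
import math
from collections import defaultdict


def percentile(values, pct):
    """Nearest-rank percentile of a non-empty list of numbers."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def stage_latency_report(spans):
    """p50 and p95 latency per stage, from spans with 'stage' and 'latency_ms'."""
    by_stage = defaultdict(list)
    for span in spans:
        by_stage[span["stage"]].append(span["latency_ms"])
    return {
        stage: {"p50": percentile(vals, 50), "p95": percentile(vals, 95)}
        for stage, vals in by_stage.items()
    }


def cost_per_successful_action(tasks):
    """Total spend divided by successful tasks; failed tasks still count as spend."""
    total = sum(t["cost_usd"] for t in tasks)
    successes = sum(1 for t in tasks if t["success"])
    return total / successes if successes else float("inf")
```

Dividing by successes rather than by tasks is the point: a cheap model that fails half the time is not cheap.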

What we’re seeing in the field

Across recent deployments, a few patterns are holding:

- Edge pre-processing + regional “brains” is a sweet spot for consumer UX. Expect 20–35% latency improvements and 10–25% LLM cost reductions when you offload classification/reranking to small edge models.

- Self-hosted open models are viable for 60–80% of backoffice agent tasks when combined with curated prompts, LoRA adapters, and a strict tool policy—freeing premium models for the hardest 20–40%.

- Agent sandboxes pay for themselves. Teams that moved desktop automation into microVMs slashed incident risk and cut mean time to investigate by over 50% thanks to deterministic replay and complete session logs.

None of this is theoretical. It’s the operational layer you’ll need if you want to graduate from a demo to a durable capability.

Plan for scarcity and change

If the “beginning of scarcity in AI” narrative holds, expect capacity constraints and pricing to fluctuate. Do not anchor your product to a single model or provider. Build a small, boring router with adapter interfaces for prompts, tools, and guardrails. Keep at least one open-model path warmed up (even if it’s only for 10% of traffic). Your future self will thank you when rate limits or pricing change mid-quarter.

The punchline

Pick the inference layer that matches your latency and data profile, then design guardrails and observability like you’re building a payments system. If you do that, the model can change underneath you—and your product will keep working.

Key Takeaways

- Decide by topology and control, not model FOMO: edge for speed, VPC for sensitive data, hybrid for most real products.

- Define your highest agent tier (0–4) now; it determines sandboxing, IAM, and infra budget.

- Cloudflare edge is great for chat+RAG UX; Bedrock/self-hosted win for compliance and audit-heavy internal agents.

- Route premium cognition sparingly; push embeddings/rerankers and caches to the edge to cut both latency and cost.

- Make models a config flag. Keep an open-model escape hatch warm to handle outages and pricing shocks.

- Instrument spans, run nightly evals, and practice rollbacks. That’s how you keep quality from silently regressing.

- For desktop/console agents, isolate in ephemeral microVMs with recording and strict budgets. Anything less is a breach-in-waiting.