If you think idempotency is just an HTTP header, you’re already leaking money. Duplicate charges, double orders, phantom emails—these aren’t edge cases. They’re what happens in real networks with real users who hit refresh, real SDKs that auto-retry, and now real AI agents that redraft and resubmit requests. The recent HN thread “Idempotency is easy until the second request is different” captured the pain perfectly. As a CTO, you need idempotency that holds up when payloads drift, requests reorder, and downstream systems are only “eventually” cooperative.

Idempotency isn’t a feature flag. It’s a system property.

Idempotency means: applying the same logical operation multiple times results in the same effect, not merely the same HTTP status. Headers help, but they don’t solve races, partial side effects, or out-of-order delivery. Stripe keeps idempotency keys for 24 hours for a reason; AWS SQS FIFO’s 5‑minute dedupe window exists for a reason; Kafka’s “exactly-once” semantics are carefully scoped for a reason. The network will give you duplicates. Your code will, too.

Where duplicates actually come from

- Mobile clients and SDKs retry on timeouts, often 2–5 times with backoff. Users also hit refresh. You get two requests with different TCP connections and slightly different payloads (timestamps, nonces).

- Proxies and load balancers retry on 502/503/504. Your app might have processed the first request, but the response got dropped.

- Webhook senders (payments, logistics) will redeliver events until they see a 2xx. You will see out-of-order deliveries and duplicates—guaranteed.

- AI agents amplify retries. Tool-use loops will “adjust” parameters and resubmit near-identical mutations.

If you don’t make idempotency a first-class architectural concern, you’re choosing chargebacks and support tickets.

Define the operation, not the endpoint

Idempotency only makes sense when attached to a logical operation. “Create order #123 for account A with items X at price P” is an operation. “POST /orders” is not. Model the operation boundary first:

- Actor: Who is allowed to do this? (user, account, service)

- Target: Which resource is being mutated?

- Intent: What state transition do we expect? (cart → placed, hold → captured)

- Effect: What irreversible side effects are triggered? (charge, email, inventory decrement)

Your idempotency key should map to this logical unit, not just a request. You can’t dedupe correctly if you don’t know what “same” means.

A CTO decision framework: seven choices you can’t defer

1) Scope your keys: who and what does the key belong to?

- Per actor + operation: user_id + operation_type + client_supplied_key. Prevents cross-tenant key collisions.

- Per target resource: For updates like “set shipping address,” use resource_id + version (optimistic concurrency) rather than free-form keys.

- Per workflow stage: “authorize” vs “capture” should not share the same keyspace.
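
A minimal sketch of the scoping idea, assuming the key is stored as a composite of actor, operation type, and client-supplied key. The names ScopedKey and scoped_key, and the operation strings, are hypothetical and only illustrate the shape.

```python
from typing import NamedTuple


class ScopedKey(NamedTuple):
    """Composite idempotency key; field names are illustrative."""
    actor_id: str
    op: str          # e.g. "payment.authorize" vs "payment.capture"
    client_key: str  # value the client sent as its idempotency key


def scoped_key(actor_id: str, op: str, client_key: str) -> ScopedKey:
    # Reject empty parts so a missing actor or stage cannot collapse
    # two tenants or two workflow stages into one keyspace.
    for name, value in (("actor_id", actor_id), ("op", op), ("client_key", client_key)):
        if not value:
            raise ValueError(f"{name} must be non-empty")
    return ScopedKey(actor_id, op, client_key)


# The same client key under different actors or stages never collides.
a = scoped_key("acct_1", "payment.authorize", "k-123")
b = scoped_key("acct_2", "payment.authorize", "k-123")
c = scoped_key("acct_1", "payment.capture", "k-123")
assert len({a, b, c}) == 3
```

In a relational store, the same tuple would map onto the columns of a composite unique index.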

2) Storage and TTL: how long do you remember?

- Postgres: A single table with a unique index on (actor, op, key) is durable and simple. 1–5 ms overhead at p95 if indexed and the row size is small (200–800 bytes persisted: status, checksum, response hash, timestamps).

- Redis: Great for front-line dedupe with a short TTL (e.g., 1–6 hours). Pair with Postgres for durability of results.

- DynamoDB/Cosmos: Natural fit for global workloads with conditional writes. Be explicit about TTL and write capacity.

- TTL policy: Match your business risk. Stripe documents 24 hours; SQS FIFO dedup is 5 minutes; webhooks often redeliver for days. For orders and payments, 24–72 hours is sane; for ephemeral actions, 15–60 minutes is fine.

3) Result replay: do you return the same bytes or just the same effect?

- Store a response envelope: HTTP status, headers relevant to clients (cache-control, ETag), and a stable JSON body (or a hash + pointer).

- Determinism: Remove non-deterministic fields (timestamps, random IDs) or keep them stable across replays to prevent client confusion.
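
One way to picture the response envelope is a small record that is frozen once and replayed byte-for-byte. This is a sketch under assumptions: the ResponseEnvelope class, the freeze and replay helpers, and the choice of which headers to keep are illustrative, not a prescribed format.

```python
import json
from dataclasses import asdict, dataclass

# Headers worth replaying to clients; everything else is dropped.
REPLAYED_HEADERS = ("cache-control", "etag")


@dataclass(frozen=True)
class ResponseEnvelope:
    status: int
    headers: dict
    body: dict


def freeze(status: int, headers: dict, body: dict) -> str:
    """Serialize the first response so later replays are identical."""
    kept = {k.lower(): v for k, v in headers.items() if k.lower() in REPLAYED_HEADERS}
    return json.dumps(asdict(ResponseEnvelope(status, kept, body)), sort_keys=True)


def replay(blob: str) -> ResponseEnvelope:
    """Rebuild the stored envelope without recomputing any field."""
    return ResponseEnvelope(**json.loads(blob))
```

Because the body is stored rather than regenerated, timestamps and generated IDs inside it stay stable across replays.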

4) Payload conflict policy: the “second request is different” problem

- Canonical hash: Persist a hash of the canonicalized payload (sorted keys, trimmed strings, normalized decimals).

- On mismatch: Return 409 Conflict with the original response body and explain the canonical payload that “won.” Never silently accept a different mutation under the same key.

- Upgrades: If you support “idempotency + patch,” require a new key but allow linking via a parent_operation_id.
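
The canonical hash can be sketched as follows. The specific normalization rules here (strip strings, render numbers without trailing zeros, sort keys) are assumptions chosen to match the bullet above; your own canonical form may differ, and the function names are hypothetical.

```python
import hashlib
import json
from decimal import Decimal


def canonicalize(value):
    """Recursively normalize a JSON-like payload."""
    if isinstance(value, dict):
        return {k: canonicalize(v) for k, v in sorted(value.items())}
    if isinstance(value, list):
        return [canonicalize(v) for v in value]
    if isinstance(value, str):
        return value.strip()
    if isinstance(value, bool):  # check before int: bool is an int subclass
        return value
    if isinstance(value, (int, float, Decimal)):
        # 10, 10.0 and Decimal("10.00") all become "10".
        # Note: this sketch also maps the string "10" to the same form;
        # tag numeric values if that distinction matters to you.
        return format(Decimal(str(value)).normalize(), "f")
    return value  # None passes through unchanged


def payload_hash(payload: dict) -> str:
    canonical = json.dumps(
        canonicalize(payload), sort_keys=True, separators=(",", ":"), ensure_ascii=False
    )
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()
```

Two payloads that differ only in key order, surrounding whitespace, or trailing zeros then hash identically, while a changed amount does not.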

5) Concurrency control: stop races, not just duplicates

- Atomic first write: Use an INSERT … ON CONFLICT DO NOTHING (or conditional put) to claim a key before performing the effect. If you lose the race, poll the winner’s record and replay its result.

- Advisory locks: For hot keys (e.g., same cart), a short-lived advisory lock per resource prevents thundering herds without coarse global locks.

- Timeouts: If the owner of a claimed key crashes, release after a grace period and mark the record as “indeterminate,” forcing a reconciliation path.
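
The atomic first write can be sketched like this. To keep the example runnable with only the standard library, it uses an in-memory SQLite database as a stand-in for Postgres; the ON CONFLICT clause is written the same way in both. The try_claim function and the trimmed-down table are illustrative.

```python
import sqlite3

conn = sqlite3.connect(":memory:")  # stand-in for a Postgres connection
conn.execute(
    """
    CREATE TABLE idempotency_keys (
        actor_id   TEXT NOT NULL,
        op         TEXT NOT NULL,
        key        TEXT NOT NULL,
        status     TEXT NOT NULL,
        claimed_by TEXT NOT NULL,
        UNIQUE (actor_id, op, key)
    )
    """
)


def try_claim(actor_id: str, op: str, key: str, worker: str) -> bool:
    """Return True only for the caller whose insert created the row."""
    with conn:  # commits on success, rolls back on exception
        cur = conn.execute(
            """
            INSERT INTO idempotency_keys (actor_id, op, key, status, claimed_by)
            VALUES (?, ?, ?, 'in_progress', ?)
            ON CONFLICT (actor_id, op, key) DO NOTHING
            """,
            (actor_id, op, key, worker),
        )
    # rowcount is 1 if the row was inserted, 0 if the key already existed.
    return cur.rowcount == 1
```

The unique constraint, not application logic, decides the winner, so two workers racing on the same key cannot both see True.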

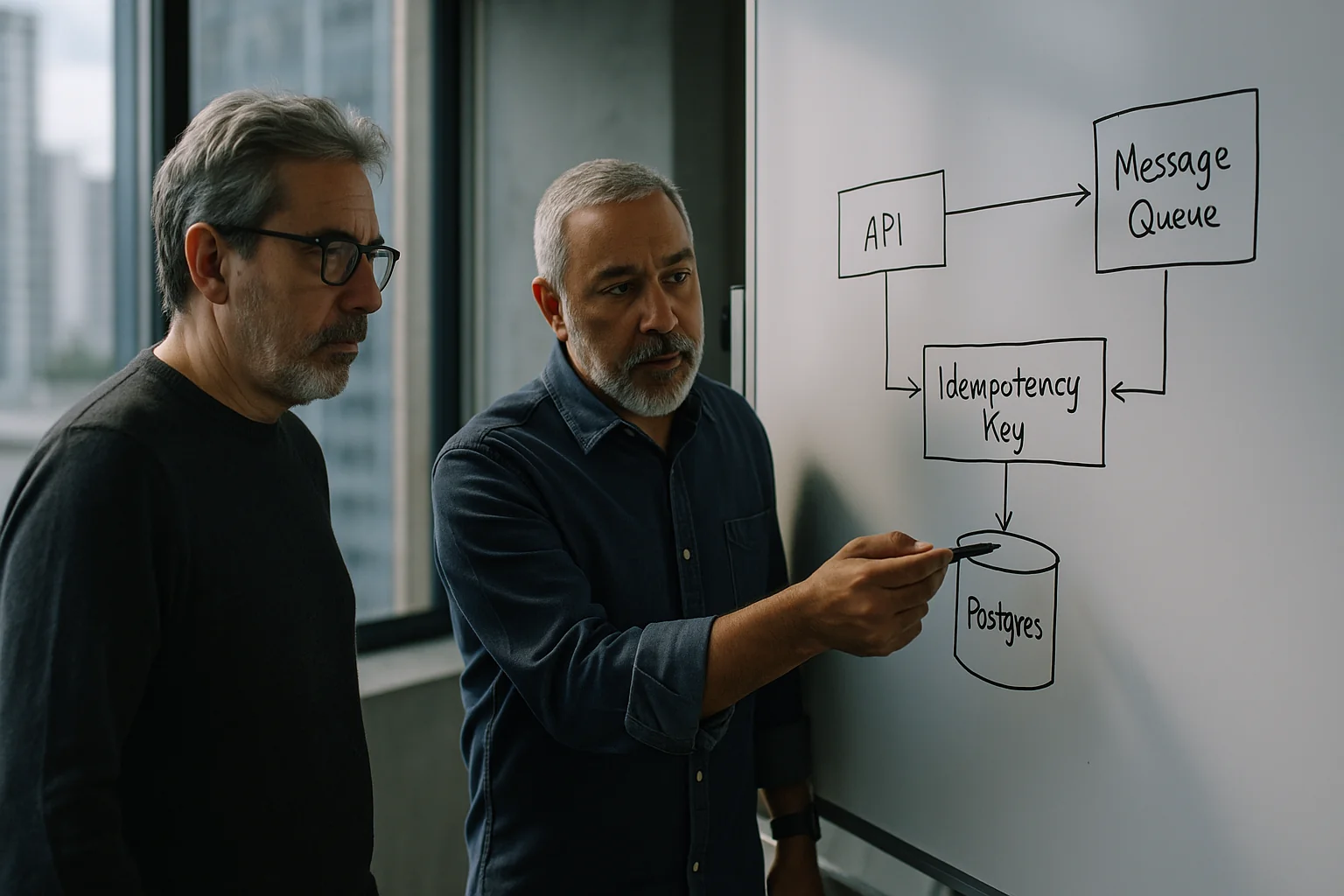

6) Side-effect isolation: the outbox/inbox pattern

- Outbox: Write the domain state change and an “event to publish” in the same transaction. A relay publishes from the outbox to Kafka/SNS and marks it sent. Prevents “updated DB but failed to publish” splits.

- Inbox: For consumers (especially webhooks), dedupe on (source, event_id) before applying state. Persist the outcome. Return 2xx only after a durable commit.

- Idempotent emails/SMS: Store a message_id and suppress duplicates within a window. Downstream vendors also retry.
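
A minimal outbox sketch, again using in-memory SQLite in place of your real database. The orders and outbox tables, the topic name, and the publish callback are assumptions for illustration; a real relay would publish to Kafka or SNS.

```python
import json
import sqlite3

db = sqlite3.connect(":memory:")
db.executescript(
    """
    CREATE TABLE orders (id TEXT PRIMARY KEY, status TEXT NOT NULL);
    CREATE TABLE outbox (
        id      INTEGER PRIMARY KEY AUTOINCREMENT,
        topic   TEXT NOT NULL,
        payload TEXT NOT NULL,
        sent    INTEGER NOT NULL DEFAULT 0
    );
    """
)


def place_order(order_id: str) -> None:
    # The state change and the event row commit together or not at all.
    with db:
        db.execute("INSERT INTO orders (id, status) VALUES (?, 'placed')", (order_id,))
        db.execute(
            "INSERT INTO outbox (topic, payload) VALUES (?, ?)",
            ("order.placed", json.dumps({"order_id": order_id})),
        )


def relay_once(publish) -> int:
    """Publish unsent rows in order, marking each sent after publish returns."""
    rows = db.execute(
        "SELECT id, topic, payload FROM outbox WHERE sent = 0 ORDER BY id"
    ).fetchall()
    for row_id, topic, payload in rows:
        publish(topic, payload)  # if this raises, the row stays unsent and is retried
        with db:
            db.execute("UPDATE outbox SET sent = 1 WHERE id = ?", (row_id,))
    return len(rows)
```

Note that the relay is itself at-least-once: a crash between publish and the UPDATE republishes the event, which is why consumers still need inbox dedupe.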

7) Boundaries of “exactly-once”

- Queues: Kafka’s idempotent producer and transactions give exactly-once processing for consume-process-produce pipelines that stay within Kafka. The moment you call an external API, you’re back to at-least-once. Design with that honesty.

- Datastores: Even within a single DB, “exactly-once” relies on your unique constraints and transaction model. Test the failure matrix (timeout after commit, process crash, network partition).

A pragmatic reference implementation

Postgres-first: boring, correct, fast enough

- Table: idempotency_keys(actor_id, op, key, payload_hash, status, response_blob, created_at, updated_at, claimed_by, expires_at). Unique index on (actor_id, op, key).

- Flow: The client sends an Idempotency-Key. The server canonicalizes the payload, computes payload_hash, and attempts an INSERT to claim the key. If the insert succeeds, do the work, store response_blob, and set status=completed. If it conflicts, SELECT the existing row: if the hash mismatches, return 409 with the winner’s response; if payload_hash matches and status=completed, return the stored response; if the row is still in progress, poll with backoff.

- Numbers: With a JSONB response under 8 KB and proper indexing, p95 extra latency of 1–3 ms in Postgres 14+ on mid-tier hardware is typical. Storage cost is roughly a few hundred bytes per request plus the response size; with a 72‑hour TTL you generally stay in the single-digit GB range for most SaaS APIs.
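
The flow above can be sketched end to end. This is a simplified illustration, not a production handler: SQLite stands in for Postgres, the table keeps only the columns the flow needs, the handle function and its do_work callback are hypothetical, and the in-progress branch returns a placeholder 202 instead of polling with backoff.

```python
import hashlib
import json
import sqlite3

conn = sqlite3.connect(":memory:")  # stand-in for Postgres
conn.execute(
    """
    CREATE TABLE idempotency_keys (
        actor_id TEXT NOT NULL, op TEXT NOT NULL, key TEXT NOT NULL,
        payload_hash TEXT NOT NULL, status TEXT NOT NULL, response_blob TEXT,
        UNIQUE (actor_id, op, key)
    )
    """
)


def payload_hash(payload: dict) -> str:
    # Simplified canonical form: sorted keys, compact separators.
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def handle(actor_id, op, key, payload, do_work):
    """Return (http_status, body) for one request."""
    digest = payload_hash(payload)
    with conn:
        cur = conn.execute(
            "INSERT INTO idempotency_keys (actor_id, op, key, payload_hash, status) "
            "VALUES (?, ?, ?, ?, 'in_progress') "
            "ON CONFLICT (actor_id, op, key) DO NOTHING",
            (actor_id, op, key, digest),
        )
    if cur.rowcount == 1:
        # We own the key: perform the effect, then persist the response.
        body = do_work(payload)
        with conn:
            conn.execute(
                "UPDATE idempotency_keys SET status = 'completed', response_blob = ? "
                "WHERE actor_id = ? AND op = ? AND key = ?",
                (json.dumps(body), actor_id, op, key),
            )
        return 200, body

    stored_hash, status, blob = conn.execute(
        "SELECT payload_hash, status, response_blob FROM idempotency_keys "
        "WHERE actor_id = ? AND op = ? AND key = ?",
        (actor_id, op, key),
    ).fetchone()
    winner = json.loads(blob) if blob else None
    if stored_hash != digest:
        return 409, {"error": "key reused with a different payload", "original": winner}
    if status != "completed":
        # Placeholder: a real server would poll the row with backoff here.
        return 202, {"status": "in_progress"}
    return 200, winner
```

Calling handle twice with the same key and payload runs do_work once and returns the stored body the second time; a changed payload under the same key gets the 409.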

Redis acceleration: front cache, Postgres truth

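- SET with NX and a short expiry (SET key value NX EX seconds) to gate the hot path, so the claim and its TTL are applied in one atomic command; a bare SETNX followed by EXPIRE can leave a claim with no TTL if the process dies in between. If the set succeeds, proceed and write the durable record in Postgres; if not, fetch from Postgres.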

- Lua script to atomically compare payload hash and set claim markers to avoid brief races.

- Trade-off: Complexity increases, but you shave 1–2 ms at p95 for very hot endpoints. Don’t rely on Redis alone for business-critical durability.

Webhooks: inbox or it didn’t happen

- Deliveries table keyed by (provider, event_id). Unique constraint + status + business effect pointer.

- Return 200 only after a durable commit. For short handlers, that commit is the domain state transition itself. For long work, commit the inbox row first, then acknowledge and hand the processing off to a queue.

- Reordering: Use event version (e.g., seq) and process safely even if N+1 arrives before N. That means your handlers must be idempotent too.
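
An inbox handler along these lines might look like the sketch below. It assumes each event carries a per-resource seq and describes the resource's latest state, so a lower seq can simply be ignored; the shipments table, the handle_webhook function, and the return labels are hypothetical. SQLite stands in for the real database.

```python
import sqlite3

db = sqlite3.connect(":memory:")
db.executescript(
    """
    CREATE TABLE deliveries (
        provider TEXT NOT NULL,
        event_id TEXT NOT NULL,
        PRIMARY KEY (provider, event_id)
    );
    CREATE TABLE shipments (
        id     TEXT PRIMARY KEY,
        status TEXT NOT NULL,
        seq    INTEGER NOT NULL
    );
    """
)


def handle_webhook(provider, event_id, shipment_id, seq, status) -> str:
    """Return 'applied', 'duplicate', or 'stale'. All three are safe to ack."""
    with db:  # dedupe row and state change commit together
        cur = db.execute(
            "INSERT INTO deliveries (provider, event_id) VALUES (?, ?) "
            "ON CONFLICT (provider, event_id) DO NOTHING",
            (provider, event_id),
        )
        if cur.rowcount == 0:
            return "duplicate"
        # Only move forward: an older seq never overwrites a newer state.
        cur = db.execute(
            "INSERT INTO shipments (id, status, seq) VALUES (?, ?, ?) "
            "ON CONFLICT (id) DO UPDATE SET status = excluded.status, seq = excluded.seq "
            "WHERE excluded.seq > shipments.seq",
            (shipment_id, status, seq),
        )
        return "applied" if cur.rowcount == 1 else "stale"
```

If your events are deltas rather than full state, ignoring a late event is not enough; you would need to buffer until the gap is filled.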

SQS/Kafka interop: be honest about guarantees

- SQS FIFO gives ordered delivery within a message group and a 5‑minute deduplication window. Still design consumers to handle duplicates and replays outside that window.

- Kafka: Use idempotent producers and transactions for intra-Kafka EOS. When bridging to external systems, re-enter at-least-once with an outbox and consumer-side inbox.

Handling “same key, different payload” without drama

This is where most teams break. Decide and document policy:

- Hard conflict: If hash differs, return 409 with the original response and the canonical payload that claimed the key. Log a structured audit event.

- Soft upgrade: Allow minor changes (e.g., adding a memo field). Implement a payload schema hash that ignores non-semantic fields. Requires discipline—don’t overuse.

- New intent, new key: For any material change (amount, items, address), require a fresh key with an optional parent_operation_id. Teach your SDKs to do this by default.
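
The three policies can be expressed as one classification step. In this sketch the set of non-semantic fields is a made-up example, and classify is a hypothetical helper; the point is only that "soft upgrade" compares hashes after stripping an explicit allowlist.

```python
import hashlib
import json

# Hypothetical fields that do not change the business intent.
NON_SEMANTIC_FIELDS = frozenset({"memo", "client_timestamp"})


def _digest(payload: dict) -> str:
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def _semantic(payload: dict) -> dict:
    return {k: v for k, v in payload.items() if k not in NON_SEMANTIC_FIELDS}


def classify(original: dict, incoming: dict) -> str:
    """Compare a retry against the payload that first claimed the key."""
    if _digest(original) == _digest(incoming):
        return "replay"        # identical: return the stored response
    if _digest(_semantic(original)) == _digest(_semantic(incoming)):
        return "soft_upgrade"  # differs only in allowlisted fields
    return "conflict"          # material change: 409, require a new key
```

Keeping the allowlist short and explicit is what stops "soft upgrade" from turning into silent drift.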

Observability: you can’t manage what you can’t see

- Log the envelope: actor_id, op, key, payload_hash, status, winner_node, duration_ms, replayed=true/false.

- Metrics: duplicates_blocked_per_route, in_progress_timeouts, conflict_rate, replay_rate, p95_claim_latency. If conflict_rate spikes after a deploy, you broke canonicalization.

- Tracing: Propagate operation_id through your spans. Tag downstream side effects (email_id, charge_id) to see fan-out and retries.
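
As a rough illustration of the log envelope, the sketch below emits one JSON line per request with the fields listed above. The log_envelope helper and the logger name are assumptions; plug the record into whatever structured logging you already run.

```python
import json
import logging
import time

logger = logging.getLogger("idempotency")


def log_envelope(actor_id, op, key, payload_hash, status, started_at,
                 replayed, winner_node=None) -> str:
    """Emit one structured line per request; started_at is a time.monotonic() value."""
    record = {
        "actor_id": actor_id,
        "op": op,
        "key": key,
        "payload_hash": payload_hash,
        "status": status,
        "winner_node": winner_node,
        "duration_ms": round((time.monotonic() - started_at) * 1000, 3),
        "replayed": replayed,
    }
    line = json.dumps(record, sort_keys=True)
    logger.info(line)
    return line
```

Counting lines where replayed is true, grouped by op, gives you replay_rate per route without extra instrumentation.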

AI agents make idempotency non-negotiable

Agents don’t get bored; they retry. They also mutate payloads in “helpful” ways (“rounded amount to two decimals”). If you’re exposing internal tools to agents, enforce a mutation envelope:

- operation_id: Stable per intent, generated on first plan.

- intent_hash: Hash of canonical business fields (not formatting).

- capabilities_version: So you can reject old plans that don’t match current contracts.

- server-side keys only: Don’t trust agent-supplied keys from prompts or tools. Issue them per session, scoped.
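
A mutation envelope check could look roughly like this. Everything here is a sketch under assumptions: the in-memory registry stands in for a real session store, the version string is invented, and issue_operation_id, intent_hash, and check_envelope are hypothetical names.

```python
import hashlib
import json
import secrets
from dataclasses import dataclass

CURRENT_CAPABILITIES_VERSION = "v2"  # hypothetical contract version

# operation_id -> session_id; an in-memory stand-in for a real store.
_issued = {}


def issue_operation_id(session_id: str) -> str:
    """The server, not the agent, mints the ID and scopes it to a session."""
    operation_id = secrets.token_urlsafe(16)
    _issued[operation_id] = session_id
    return operation_id


def intent_hash(business_fields: dict) -> str:
    # Hash only canonical business fields, e.g. amounts in minor units.
    canonical = json.dumps(business_fields, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


@dataclass(frozen=True)
class MutationEnvelope:
    operation_id: str
    intent_hash: str
    capabilities_version: str


def check_envelope(envelope: MutationEnvelope, session_id: str, business_fields: dict) -> str:
    """Return 'ok' or the reason the mutation is rejected."""
    if _issued.get(envelope.operation_id) != session_id:
        return "unknown_operation_id"
    if envelope.capabilities_version != CURRENT_CAPABILITIES_VERSION:
        return "stale_capabilities"
    if envelope.intent_hash != intent_hash(business_fields):
        return "intent_drift"
    return "ok"
```

An agent that "helpfully" changes the amount on a retry then fails with intent_drift instead of creating a second mutation.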

Security and abuse controls

- Key length and format: 16–32 bytes of randomness, encoded as base64 or hex. Enforce a maximum length and an allowed character set, and reject anything else, to prevent memory abuse.

- TTL + quotas: Enforce per-tenant limits on new keys per minute. A flood of unique keys is a DoS vector.

- Encryption at rest: Response blobs can contain PII. Treat the idempotency store like application data, not logs.
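
The first two controls can be sketched with a format check and a per-tenant sliding window. The length bounds assume 16 to 32 random bytes encoded as hex or base64 (22 to 64 characters); the NewKeyQuota class and the limits are illustrative, and a real deployment would keep the counters in shared storage rather than process memory.

```python
import re
from collections import defaultdict, deque

# 16 bytes as unpadded base64 is 22 chars; 32 bytes as hex is 64 chars.
KEY_PATTERN = re.compile(r"[A-Za-z0-9_\-+/=]{22,64}")


def is_valid_key(key: str) -> bool:
    return KEY_PATTERN.fullmatch(key) is not None


class NewKeyQuota:
    """Sliding one-minute window of new keys per tenant (in-memory sketch)."""

    def __init__(self, limit_per_minute: int):
        self.limit = limit_per_minute
        self._seen = defaultdict(deque)  # tenant_id -> timestamps of new keys

    def allow(self, tenant_id: str, now: float) -> bool:
        window = self._seen[tenant_id]
        # Drop timestamps that have aged out of the window.
        while window and now - window[0] >= 60.0:
            window.popleft()
        if len(window) >= self.limit:
            return False
        window.append(now)
        return True
```

Passing the clock in as an argument keeps the limiter easy to test; in production you would call it with time.monotonic().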

Rollout plan: 30/60/90 days

Day 0–30: choose scope, build the boring rails

- Identify the top 5 mutation endpoints by revenue and risk (payments, orders, inventory, credits).

- Implement the Postgres-first pattern with unique constraints and response replay for those routes.

- Instrument metrics and logs from day one. Block conflicting payloads with 409.

Day 31–60: wire in webhooks and side effects

- Add inbox dedupe for webhooks from your top three providers. Persist outcomes.

- Move emails/SMS to idempotent sends keyed by message_id. Suppress duplicates within 24 hours.

- Introduce outbox for domain events that fan out to Kafka/SNS. Validate the recovery path after crash/timeout.

Day 61–90: harden and scale

- Add Redis front-cache if p95 worsens after adoption. Keep Postgres as source of truth.

- Run a chaos drill: inject timeouts after commit, duplicate deliveries, and reordered events. Prove no double charges or phantom orders.

- Teach your SDKs and partner integrators to send keys consistently. Document the conflict policy publicly.

Trade-offs you should accept upfront

- Storage overhead: You’ll store a record per logical mutation for 24–72 hours. That’s cheap insurance compared to even a handful of chargebacks.

- Latency tax: Expect 1–3 ms at p95 for the claim/read path in a well-tuned relational DB. If that’s too much, you’re optimizing the wrong layer.

- Strictness costs support: Returning 409 for conflicting payloads creates tickets. That’s fine. Better a support touch than silent data corruption.

- Not everything needs a key: Pure reads and truly idempotent PUTs (set exact value) don’t need this machinery. Be selective.

What good looks like

- Every mutation route documents its operation boundary and conflict policy.

- Each response includes an operation_id so clients can correlate replays.

- Duplicates are observable: you can show a dashboard of blocked duplicates by route and tenant.

- Webhooks are safe to replay indefinitely: inbox table enforces dedupe, handlers are idempotent.

- Side effects hang off outboxes, and you can kill a worker mid-flight without double-sending.

Idempotency that survives retries, reorders, and “the second request is different” isn’t over-engineering. It’s the minimum bar for money-moving and order-taking systems in 2026. Treat it like a business requirement, not a developer preference, and your pager, along with your finance team, will stay quiet.

Key Takeaways

- Idempotency is a system property anchored to a logical operation, not a request header.

- Decide scope, storage, TTL, replay strategy, conflict policy, concurrency control, and side-effect isolation up front.

- Use a durable store (Postgres/DynamoDB) with a unique constraint; Redis can accelerate but shouldn’t be your truth.

- Handle “same key, different payload” with canonical hashes and 409 Conflict; never accept silent drift.

- Adopt outbox/inbox patterns to make external side effects (events, emails, webhooks) safely idempotent.

- Instrument duplicates, conflicts, and p95 claim latency; chaos test retries and reorders before customers do.

- AI agents increase retries and payload drift; enforce a mutation envelope with stable operation IDs.